Ensuring Accuracy: Calibrated Spectrometer Assay Verification

Spectrometry is a cornerstone of modern analytical chemistry, providing a powerful lens through which to observe and quantify the elemental and molecular composition of substances. At its heart, a spectrometer relies on the precise interaction of light with matter, a complex dance that yields invaluable data. However, the integrity of this data, and consequently the conclusions drawn from it, hinges entirely on the accuracy of the instrument itself. In the realm of scientific inquiry, accuracy is not a luxury; it is the bedrock upon which reliable knowledge is built. Therefore, the diligent verification of spectrometer assays through meticulous calibration is paramount. This article delves into the critical processes and considerations that underpin accurate spectrometry, exploring how routine calibration and verification procedures act as the guardians of data fidelity.

Understanding the Principles of Spectrometry

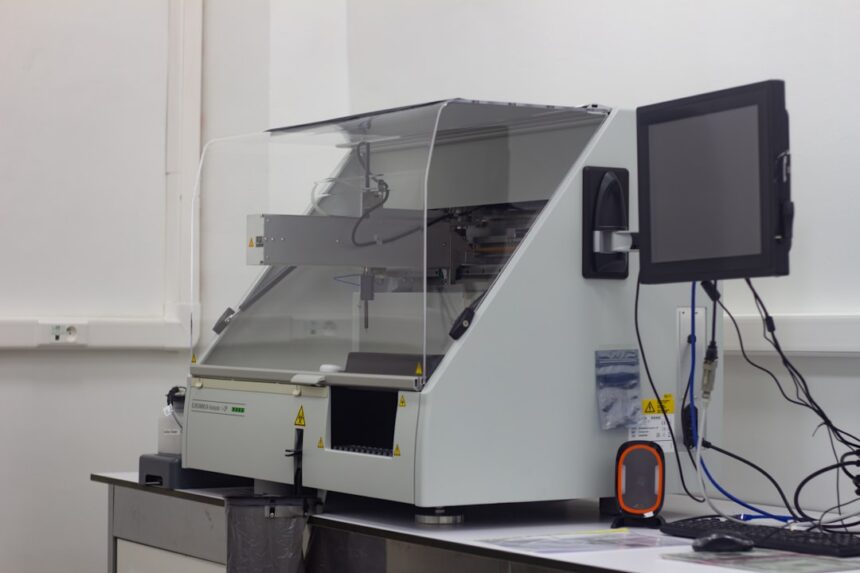

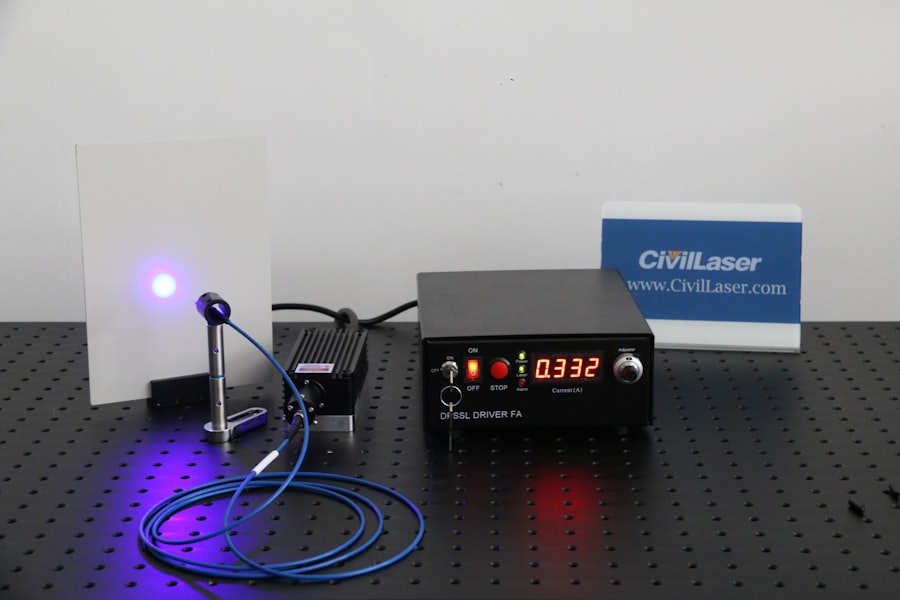

At its fundamental level, spectrometry involves the measurement of the intensity of radiation as a function of its wavelength or frequency. When a sample is exposed to a source of electromagnetic radiation, it can absorb, transmit, or emit photons. The unique spectral “fingerprint” created by this interaction provides information about the sample’s constituents. Different types of spectrometers exist, each tailored to specific regions of the electromagnetic spectrum and the types of analyses they perform. These include ultraviolet-visible (UV-Vis) spectrometers, infrared (IR) spectrometers, and atomic emission spectrometers (AES), among others. Mass spectrometers (MS) are often grouped with them, although they separate ions by mass-to-charge ratio rather than measuring light. For the optical techniques, the underlying principle remains the same: the spectrometer acts as a highly sensitive detector, translating subtle interactions with light into quantifiable data. Think of a spectrometer as a highly sophisticated concert hall, where each chemical element or molecule plays its own unique instrument, and the spectrometer meticulously records the notes produced. To ensure these notes are true and not distorted, regular tuning of the instruments is essential.

Key Components of a Spectrometer and Their Roles

A typical spectrometer consists of several crucial components, each playing a vital role in the analytical process.

- Light Source: This component generates the electromagnetic radiation that interacts with the sample. The choice of light source depends on the spectral region of interest. For example, UV-Vis instruments commonly use a deuterium lamp for the ultraviolet range and a tungsten-halogen lamp for the visible range, while a globar is used for the mid-IR. The stability and spectral output of the light source are critical for consistent measurements.

- Wavelength Selector (Monochromator or Grating): This element is responsible for isolating specific wavelengths of light from the source or separating the light that has interacted with the sample into its constituent wavelengths. This precise selection is fundamental to obtaining a spectrum.

- Sample Compartment: This is where the sample, in its appropriate form (liquid, solid, or gas), is placed for analysis. The design of the sample compartment is crucial for ensuring consistent light path and minimizing interference.

- Detector: This component converts the transmitted or emitted radiation into an electrical signal that can be processed and displayed. Photomultiplier tubes (PMTs), photodiodes, and charge-coupled devices (CCDs) are common types of detectors, each with varying sensitivities and response ranges.

- Signal Processing and Readout System: This encompasses the electronics and software that amplify, digitize, and interpret the signal from the detector, ultimately presenting it as a spectrum or a quantitative value.

The Imperative of Calibration: Establishing a Truce with Uncertainty

Why Calibration is Non-Negotiable

In any scientific discipline where measurement is involved, calibration is not simply a good practice; it is a fundamental requirement for drawing credible conclusions. Calibration is the process of comparing the readings of a measuring instrument against a known standard or reference. For a spectrometer, this translates to ensuring that the wavelengths it measures are accurate and that the intensity of the light it detects is representative of the sample’s properties. Without calibration, the spectrometer’s readings are akin to a compass spun wildly by a strong magnet – they may point somewhere, but not necessarily in the correct direction.

Defining Calibration Standards and Their Characteristics

Calibration standards are materials of known and certified properties that are used to adjust or verify the performance of an instrument. For spectrometers, these standards typically fall into two main categories:

- Wavelength Calibration Standards: These are substances or devices that exhibit sharp absorption or emission peaks at precisely known wavelengths. Examples include rare earth element solutions for atomic emission spectrometers, or specific holmium oxide filters for UV-Vis spectrometers. The accuracy of the wavelength calibration directly impacts the identification of analytes. If the spectrometer misidentifies a wavelength, it might incorrectly assign a spectral feature to a particular element or compound, leading to erroneous identification.

- Intensity (or Photometric) Calibration Standards: These are materials or devices designed to verify the accuracy of the spectrometer’s measurement of light intensity or absorbance/transmittance. For UV-Vis, certified neutral density filters with known absorbance values are commonly used. For other spectroscopic techniques, reference solutions with known concentrations of specific analytes are employed. Accurate intensity calibration is crucial for quantitative analysis, ensuring that the measured signal directly and reliably correlates with the concentration of the analyte.

Types of Calibration: From Initial Setup to Ongoing Maintenance

Calibration is not a one-time event but rather a continuous process that adapts to the life cycle of the instrument and the demands of the analytical task.

- Initial Calibration: This is performed when a new spectrometer is installed or after significant maintenance or repair. It establishes the baseline performance of the instrument and ensures it meets its manufactured specifications. This is like the initial factory reset and setup of a new device.

- Periodic Calibration: This is a routine calibration performed at regular intervals, as dictated by the instrument’s manufacturer, laboratory protocols, or regulatory requirements. The frequency of periodic calibration depends on factors such as instrument usage, environmental conditions, and the criticality of the analytical data. Skipping periodic calibration is like neglecting to change the oil in a car; eventually, performance degrades, and potential issues go unnoticed.

- Post-Maintenance Calibration: Whenever a spectrometer undergoes maintenance, repair, or replacement of a key component (e.g., light source, detector), it must be recalibrated. This is to ensure that the repaired or replaced part integrates seamlessly with the rest of the system and that the instrument’s performance is restored to its intended state.

- Calibration Verification: This step confirms an existing calibration rather than establishing a new one. It involves measuring a calibration standard to confirm that the instrument’s current calibration is still valid and within acceptable limits. It is a quality control measure to ensure the instrument has not drifted since its last full calibration.

Verification Procedures: The Watchful Guardians of Data Integrity

The Role of Verification in the Analytical Workflow

Calibration establishes the instrument’s ability to accurately measure. Verification, on the other hand, is the ongoing process of confirming that the instrument remains accurate during routine use. It’s the daily or weekly patrol that ensures the established accuracy is maintained. Verification procedures act as a safeguard, catching any deviations from the calibrated state before they compromise analytical results. Imagine a meticulously set clock; verification is like glancing at it throughout the day to ensure it’s still keeping perfect time.

Specific Verification Protocols for Spectrometers

The nature of verification protocols can vary depending on the type of spectrometer and the intended application. However, they generally involve analyzing known reference materials or performing specific diagnostic tests.

- Analysis of Certified Reference Materials (CRMs): CRMs are materials with precisely characterized properties, often supplied by accredited organizations. Analyzing CRMs as if they were unknown samples allows for direct comparison of the instrument’s results with the certified values. Deviations outside acceptable tolerance limits indicate a need for recalibration or troubleshooting.

- Running System Suitability Tests (SSTs): SSTs are a set of tests performed before or during a series of sample analyses to demonstrate that the analytical system (including the spectrometer) is performing adequately. These tests assess parameters such as signal-to-noise ratio, peak resolution, and detector linearity. For spectrometers, SSTs might involve analyzing a known standard solution multiple times to assess reproducibility or measuring a blank solution to check for background interference.

- Monitoring Instrument Performance Trends: Experienced laboratory personnel often track key performance indicators of the spectrometer over time. This can include logging the measured absorbance of a specific reference material or monitoring noise levels. Deviations from established trends can be early indicators of potential instrument problems that may require attention before they escalate and lead to inaccurate results. This proactive approach is like a doctor monitoring a patient’s vital signs to detect subtle changes that could signal an impending illness.
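The trend-monitoring idea in the last item can be sketched as a simple control chart. This is a minimal illustration, not a prescribed procedure: the absorbance readings are hypothetical, the function names `control_limits` and `classify` are invented for the example, and the ±2σ warning and ±3σ action limits are a common convention assumed here rather than something the protocols above specify.

```python
from statistics import mean, stdev

def control_limits(baseline):
    """Return (center, sigma) from readings taken while the instrument was in control."""
    return mean(baseline), stdev(baseline)

def classify(reading, center, sigma):
    """Label a reading 'ok', 'warning' (beyond 2 sigma), or 'action' (beyond 3 sigma)."""
    deviation = abs(reading - center)
    if deviation > 3 * sigma:
        return "action"
    if deviation > 2 * sigma:
        return "warning"
    return "ok"

# Hypothetical absorbance readings of one reference material (assumed data).
baseline = [0.500, 0.501, 0.499, 0.502, 0.498, 0.500, 0.501, 0.499]
center, sigma = control_limits(baseline)

# New readings logged during routine use (also hypothetical).
new_readings = [0.500, 0.503, 0.506]
statuses = [classify(r, center, sigma) for r in new_readings]

for reading, status in zip(new_readings, statuses):
    print(f"{reading:.3f} AU -> {status}")
```

A "warning" here would prompt closer observation, while an "action" would typically trigger recalibration or troubleshooting before further samples are run.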

Establishing Acceptance Criteria and Tolerance Limits

A critical component of any verification procedure is the definition of clear acceptance criteria. These are the predefined limits within which the instrument’s performance must fall for the analytical results to be considered valid.

- Defining Tolerance Limits: Tolerance limits, or acceptable ranges, are established based on the precision and accuracy requirements of the analytical method, regulatory guidelines, and the inherent variability of the instrument and the standards used. For example, a wavelength accuracy might be specified as ±0.5 nm, while an absorbance accuracy might be ±2% of the certified value.

- The Consequence of Exceeding Limits: When verification results fall outside the established acceptance criteria, it signifies that the spectrometer’s performance has drifted beyond acceptable levels. In such instances, the analytical data generated since the last successful verification (or calibration) is considered questionable and may need to be reanalyzed. This is the moment where you realize the compass has gone rogue, and all directions determined since it started spinning erratically are suspect.
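The two example tolerances above (±0.5 nm for wavelength, ±2% of the certified value for absorbance) can be expressed as a pair of checks. This is a sketch only: the helper names and the measured and certified values are hypothetical, and a real laboratory would take its limits from its own method and standards.

```python
def within_absolute(measured, certified, tolerance):
    """True if the measured value is within +/- tolerance of the certified value."""
    return abs(measured - certified) <= tolerance

def within_relative(measured, certified, fraction):
    """True if the measured value is within +/- fraction (e.g. 0.02 = 2%) of certified."""
    return abs(measured - certified) <= fraction * abs(certified)

# Hypothetical readings checked against the example limits from the text.
wavelength_ok = within_absolute(measured=536.6, certified=536.2, tolerance=0.5)
absorbance_ok = within_relative(measured=0.515, certified=0.500, fraction=0.02)

print("wavelength:", "pass" if wavelength_ok else "fail")
print("absorbance:", "pass" if absorbance_ok else "fail")
```

In this made-up case the wavelength check passes (0.4 nm off) but the absorbance check fails (3% high), so data generated since the last successful verification would need review.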

Mastering the Art of Wavelength and Intensity Verification

Wavelength Verification: Ensuring the Spectrometer Sees the “Right” Colors

The accurate identification of analytes in spectroscopy is predicated on the precise measurement of wavelengths. A spectrometer that is not correctly calibrated in terms of wavelength may misidentify elements or compounds, leading to false positives or negatives.

Methods for Wavelength Verification

Several methods are employed to verify the wavelength accuracy of a spectrometer:

- Using Absorption Bands of Known Standards: For UV-Vis and IR spectrometers, reference materials with sharp and well-defined absorption bands at known wavelengths are used. For example, holmium oxide filters are widely used for UV-Vis wavelength verification because of their characteristic absorption peaks across the ultraviolet and visible regions, and polystyrene film is a common reference for IR.

- Emission Lines of Gas Discharge Lamps: Atomic emission spectrometers often utilize the well-characterized emission lines of specific elements present in gas discharge lamps (e.g., mercury or neon lamps). The spectrometer is used to measure the wavelengths of these emission lines and compare them to their certified values.

- Calibration Using Internal Wavelength Calibration Wheels or Filters: Some modern spectrometers are equipped with internal calibration wheels or filters that contain materials with known spectral features, allowing for rapid and automated wavelength checks.
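Whichever method is used, the comparison step is the same: match each certified peak to the nearest measured peak and look at the difference. The sketch below illustrates that step under stated assumptions. The peak positions are placeholders, not values from any real certificate, and the function name `wavelength_errors` and the 0.5 nm limit are chosen only for illustration.

```python
def wavelength_errors(certified_nm, measured_nm):
    """Pair each certified peak with the nearest measured peak; return signed errors in nm."""
    errors = {}
    for ref in certified_nm:
        nearest = min(measured_nm, key=lambda m: abs(m - ref))
        errors[ref] = round(nearest - ref, 3)
    return errors

# Placeholder peak positions: replace with the values on the standard's certificate.
certified = [279.3, 360.8, 453.4, 536.4]
# Hypothetical peak positions reported by the instrument.
measured = [279.5, 360.9, 453.2, 537.1]

TOLERANCE_NM = 0.5  # assumed acceptance limit
errors = wavelength_errors(certified, measured)
failed = [ref for ref, err in errors.items() if abs(err) > TOLERANCE_NM]

for ref, err in errors.items():
    print(f"{ref:.1f} nm: error {err:+.1f} nm")
print("peaks out of tolerance:", failed)
```

Nearest-peak matching is a simplification that works when the expected peaks are well separated relative to the possible wavelength error.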

Factors Influencing Wavelength Accuracy

Several factors can influence the wavelength accuracy of a spectrometer and necessitate regular verification:

- Temperature Fluctuations: Changes in ambient temperature can affect the optical components of the spectrometer, leading to slight shifts in wavelength calibration.

- Mechanical Stress: Physical impacts or vibrations can misalign optical elements, impacting wavelength accuracy.

- Aging of Light Source or Detectors: Over time, the spectral output of a light source can change, and detector sensitivity can vary, potentially affecting wavelength assignments.

Intensity Verification: Quantifying the “Amount” of Light

While wavelength accuracy is crucial for qualitative analysis (identification), intensity accuracy is paramount for quantitative analysis (measurement of concentration). It ensures that the measured amount of light directly corresponds to the amount of analyte present.

Methods for Intensity Verification

Intensity verification typically involves assessing parameters related to the absorbance, transmittance, or emission intensity of the instrument.

- Using Neutral Density Filters: For UV-Vis and other absorption-based spectrometers, certified neutral density filters with known and precise absorbance values are used at specific wavelengths. The spectrometer’s measured absorbance of these filters is compared to their certified values.

- Analyzing Standard Solutions of Known Concentration: For many spectroscopic techniques, preparing and analyzing standard solutions of known analyte concentrations is the most direct method for intensity verification. The measured signal (e.g., absorbance, emission intensity) is compared to the expected signal based on the standard’s concentration and the Beer-Lambert Law (for absorption spectroscopy).

- Assessing Detector Linearity and Sensitivity: Verification procedures may also include tests to ensure that the detector responds linearly to varying light intensities and that its sensitivity remains within acceptable parameters. This can involve analyzing samples with a wide range of concentrations or analyzing the same sample multiple times to assess reproducibility at different signal levels.
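The standard-solution and linearity checks above can be combined in one short sketch. It assumes a hypothetical analyte with a molar absorptivity of 10,000 L·mol⁻¹·cm⁻¹ in a 1 cm cell, made-up measured absorbances, and illustrative limits of 2% relative error and R² ≥ 0.995; none of these numbers come from a real method.

```python
def expected_absorbance(epsilon, path_cm, conc_mol_per_l):
    """Beer-Lambert law: A = epsilon * l * c."""
    return epsilon * path_cm * conc_mol_per_l

def r_squared(x, y):
    """Coefficient of determination for a straight-line fit of y against x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy ** 2 / (sxx * syy)

EPSILON = 10_000.0   # L mol^-1 cm^-1, hypothetical analyte
PATH_CM = 1.0        # cuvette path length, assumed

concentrations = [1e-5, 2e-5, 3e-5, 4e-5, 5e-5]   # mol/L, hypothetical standards
measured = [0.101, 0.199, 0.302, 0.398, 0.501]    # AU, hypothetical readings

expected = [expected_absorbance(EPSILON, PATH_CM, c) for c in concentrations]
max_rel_error = max(abs(m - e) / e for m, e in zip(measured, expected))
r2 = r_squared(concentrations, measured)

print(f"largest relative error: {max_rel_error:.1%}")
print(f"R^2: {r2:.5f}")
```

For a single-predictor straight-line fit, R² equals the square of the Pearson correlation coefficient, which is what `r_squared` computes.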

The Impact of Intensity Deviations on Quantitative Results

Deviations in intensity calibration can have significant consequences for quantitative analysis:

- Underestimation or Overestimation of Concentration: If the instrument consistently reads lower or higher than the true intensity, it will lead to an underestimation or overestimation of analyte concentrations in the samples. This can have critical implications in fields like pharmaceutical quality control or environmental monitoring.

- Compromised Validation Studies: Inaccurate intensity measurements can invalidate method validation studies, rendering the analytical method unreliable for its intended purpose.

- Incorrect Dosage or Treatment Decisions: In clinical diagnostics or therapeutic drug monitoring, inaccurate quantitative results can lead to incorrect dosages or treatment plans, posing a risk to patient health.
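The first consequence can be made concrete with a short derivation. It is a sketch under stated assumptions: the Beer-Lambert law holds, the molar absorptivity $\varepsilon$ is taken from a reference value rather than from standards run on the same instrument, and the photometric error is a purely proportional bias $\delta$ (a symbol introduced here for illustration).

$$
A = \varepsilon \ell c \quad\Longrightarrow\quad c = \frac{A}{\varepsilon \ell}
$$

$$
A_{\text{meas}} = (1+\delta)\,A \quad\Longrightarrow\quad c_{\text{meas}} = \frac{(1+\delta)\,A}{\varepsilon \ell} = (1+\delta)\,c
$$

Under these assumptions, an instrument that reads absorbance 2% high ($\delta = 0.02$) reports concentrations that are also 2% high.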

Documentation and Traceability: The Trail of Evidence

| Metric | Description | Typical Value/Range | Unit | Acceptance Criteria |
|---|---|---|---|---|
| Wavelength Accuracy | Difference between measured and true wavelength | ±0.5 | nm | ±1 nm or better |
| Photometric Accuracy | Difference between measured and known absorbance | ±0.01 | Absorbance Units (AU) | ±0.02 AU or better |
| Linearity | Coefficient of determination (R²) of absorbance vs. concentration | 0.999 | Unitless | R² ≥ 0.995 |
| Limit of Detection (LOD) | Lowest concentration reliably detected | 0.1 – 1.0 | µM | As per assay requirements |
| Repeatability | Standard deviation of repeated measurements | ≤0.005 | Absorbance Units (AU) | ≤0.01 AU |
| Signal-to-Noise Ratio (SNR) | Ratio of signal amplitude to noise level | >100 | Unitless | >50 |
| Baseline Stability | Drift in baseline absorbance over time | ±0.001 | Absorbance Units (AU) | ±0.005 AU over 1 hour |
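Three of the metrics in the table can be computed directly from raw readings. The sketch below shows one way to do so, with assumptions labeled: all readings are hypothetical, SNR is taken as the blank-corrected mean signal divided by the standard deviation of the blank, and baseline drift is taken as the last baseline reading minus the first. Laboratories may define these quantities differently.

```python
from statistics import mean, stdev

def repeatability(readings):
    """Standard deviation of replicate measurements of the same sample."""
    return stdev(readings)

def signal_to_noise(signal_readings, blank_readings):
    """Blank-corrected mean signal divided by blank noise (one possible definition)."""
    return (mean(signal_readings) - mean(blank_readings)) / stdev(blank_readings)

def baseline_drift(baseline_readings):
    """Change in baseline absorbance from the first reading to the last."""
    return baseline_readings[-1] - baseline_readings[0]

# Hypothetical raw data in absorbance units (AU).
replicates = [0.500, 0.502, 0.499, 0.501, 0.498]
blank = [0.001, 0.003, 0.002, 0.001, 0.003]
baseline_over_hour = [0.0000, 0.0005, 0.0012, 0.0020]

rep = repeatability(replicates)
snr = signal_to_noise(replicates, blank)
drift = baseline_drift(baseline_over_hour)

# Compare against the acceptance criteria listed in the table.
print(f"repeatability {rep:.4f} AU, pass: {rep <= 0.01}")
print(f"SNR {snr:.0f}, pass: {snr > 50}")
print(f"baseline drift {drift:+.4f} AU, pass: {abs(drift) <= 0.005}")
```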

The Importance of Comprehensive Record-Keeping

In scientific endeavors, the adage “if it wasn’t documented, it didn’t happen” holds particular weight. For spectrometer assay verification, meticulous record-keeping is not just about meeting regulatory requirements; it’s about establishing a robust audit trail, enabling troubleshooting, and ensuring reproducibility.

What to Document for Each Verification Event

Every calibration and verification event should be thoroughly documented. Key information to record includes:

- Date and Time of Calibration/Verification: Precise timestamps are essential for tracking performance over time.

- Instrument Identification: Unique identifiers for the spectrometer, including its serial number.

- Standards Used: Details of the calibration standards, including their lot numbers, expiration dates, and certified values. This ensures that only validated and traceable standards are employed.

- Results of the Verification: All raw data, calculations, and the final outcome (pass/fail) of the verification procedure. This includes recorded measurements and any deviations from acceptance criteria.

- Operator Performing the Verification: The identity of the individual responsible for conducting the calibration or verification. This allows for accountability and helps in identifying potential training needs.

- Any Adjustments or Actions Taken: If adjustments were made to the instrument during calibration, or if troubleshooting was required, these actions must be meticulously documented.

- Next Calibration/Verification Due Date: Clearly marking the schedule for future maintenance ensures that the process remains continuous.
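The items above map naturally onto a structured record. The following sketch shows one possible shape, assuming a hypothetical `VerificationRecord` class and made-up field values; it is not a schema required by any regulation or vendor software.

```python
import json
from dataclasses import dataclass, asdict

@dataclass
class VerificationRecord:
    """One calibration/verification event; all field names are illustrative."""
    performed_at: str        # ISO 8601 timestamp
    instrument_serial: str
    operator: str
    standard_id: str
    standard_lot: str
    standard_expiry: str
    certified_value: float
    measured_value: float
    tolerance: float
    actions_taken: str
    next_due: str

    def passed(self):
        """Pass/fail outcome derived from the recorded values."""
        return abs(self.measured_value - self.certified_value) <= self.tolerance

    def to_json(self):
        """Serialize the record, including the outcome, for an audit log."""
        payload = asdict(self)
        payload["passed"] = self.passed()
        return json.dumps(payload, sort_keys=True)

# Hypothetical example entry.
record = VerificationRecord(
    performed_at="2024-01-15T09:30:00",
    instrument_serial="SPEC-0001",
    operator="A. Analyst",
    standard_id="holmium oxide filter",
    standard_lot="LOT-1234",
    standard_expiry="2025-12-31",
    certified_value=536.4,   # placeholder, not a real certificate value
    measured_value=536.6,
    tolerance=0.5,
    actions_taken="none",
    next_due="2024-02-15",
)
print(record.to_json())
```

Storing the raw values alongside the derived pass/fail flag keeps the outcome reproducible if acceptance limits are later revised.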

Maintaining Traceability to National and International Standards

Traceability is the ability to relate the result of a measurement to a stated reference through an unbroken chain of comparisons, all having stated uncertainties. For spectrometer assays, this means ensuring that the calibration standards used can be traced back to authoritative national or international metrology organizations, such as the National Institute of Standards and Technology (NIST) in the United States or the Bureau International des Poids et Mesures (BIPM) internationally.

The Chain of Traceability

The chain of traceability for a spectrometer’s calibration might look like this:

- The Spectrometer: Measures an unknown sample.

- Calibration Standard: The spectrometer is calibrated using a standard with a certified value.

- Manufacturer of the Standard: The standard’s value is certified by its manufacturer.

- Metrology Institute: The manufacturer’s certification is traceable to a higher-level reference material or measurement performed by a national metrology institute (NMI).

- Fundamental Standards or Physical Constants: Ultimately, the NMI’s measurements are traceable to internationally recognized units of measurement and fundamental physical constants.
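Because every link in the chain carries a stated uncertainty, the uncertainty of the final result reflects all of them. The sketch below illustrates the common root-sum-of-squares combination, assuming the contributions are independent standard uncertainties and using a coverage factor of k = 2 for the expanded uncertainty. The numerical values and link names are hypothetical.

```python
from math import sqrt

def combined_standard_uncertainty(components):
    """Combine independent standard uncertainties in quadrature (root sum of squares)."""
    return sqrt(sum(u ** 2 for u in components))

# Hypothetical standard uncertainties, in absorbance units, for each link.
chain = {
    "NMI reference measurement": 0.001,
    "manufacturer certification": 0.002,
    "laboratory measurement": 0.002,
}

u_c = combined_standard_uncertainty(chain.values())
expanded = 2 * u_c  # coverage factor k = 2, assumed

print(f"combined standard uncertainty: {u_c:.4f} AU")
print(f"expanded uncertainty (k=2): {expanded:.4f} AU")
```

If contributions are correlated, simple quadrature no longer applies and covariance terms would need to be included.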

Why Traceability Matters in Scientific Practice

Traceability is more than just a bureaucratic requirement; it is a critical aspect of good scientific practice for several reasons:

- Ensuring Interoperability and Comparability: Traceable measurements allow for the comparison of results obtained from different laboratories, using different instruments, at different times. This is crucial for collaborative research, regulatory compliance, and global trade.

- Confidence in Results: When results are traceable, it instills confidence in their accuracy and reliability, both within the laboratory and among external stakeholders, such as regulatory bodies, customers, or the scientific community.

- Dispute Resolution: In the event of a disagreement or dispute over analytical results, a robust and documented chain of traceability can serve as a critical piece of evidence to resolve the issue.

Embracing a Culture of Quality: Beyond Compliance

The Spectrometer as a Tool of Precision, Not Magic

It is vital to view the spectrometer not as a magical black box that dispenses answers, but as a sophisticated tool that requires diligent understanding and care. Its precision is a direct consequence of the care taken in its operation and maintenance. Accurate assay verification is not merely about ticking boxes; it is about fostering a deep-seated commitment to accuracy within the laboratory environment.

Proactive Maintenance and Troubleshooting: Preventing Problems Before They Arise

A proactive approach to instrument maintenance and troubleshooting is far more effective than reacting to failures.

- Regular Preventative Maintenance: Adhering to the manufacturer’s recommended preventative maintenance schedule is essential. This can include cleaning optical components, checking seals, and verifying the performance of consumables.

- Training and Competency of Personnel: Ensuring that all personnel operating the spectrometer are adequately trained and competent is fundamental. They should understand the instrument’s operating principles, calibration procedures, and troubleshooting steps.

- Establishing a Robust Troubleshooting Protocol: Having a well-defined protocol for troubleshooting instrument issues allows for systematic investigation and efficient resolution of problems. This often involves a step-by-step process of isolating potential causes and testing hypotheses.

The Broader Implications for Scientific Integrity and Public Trust

The accuracy of spectrometer assays has far-reaching implications that extend beyond the laboratory walls. In fields such as environmental monitoring, food safety, and pharmaceutical quality control, inaccurate results can lead to significant public health risks, economic losses, and erosion of public trust. Therefore, the rigorous calibration and verification of spectrometers are not just technical procedures; they are fundamental to upholding scientific integrity and safeguarding public well-being. The consistent, accurate data generated by a well-maintained and verified spectrometer acts as a lighthouse, guiding decisions and ensuring safety in a complex world. Without this reliable guiding light, we risk navigating uncertain waters with misleading information.

FAQs

What is a calibrated spectrometer?

A calibrated spectrometer is an optical instrument that has been adjusted and verified against known standards to ensure accurate measurement of light spectra. Calibration ensures the device provides precise wavelength and intensity readings.

Why is assay verification important in spectrometry?

Assay verification confirms that the spectrometer and associated methods produce reliable and reproducible results for specific assays. It ensures the accuracy and validity of quantitative measurements in analytical applications.

How is a spectrometer calibrated for assay verification?

Calibration typically involves using reference materials or standards with known spectral properties. The spectrometer’s response is adjusted to match these standards, correcting for wavelength accuracy, intensity response, and instrument linearity before performing assay verification.

What are common applications of calibrated spectrometer assay verification?

Calibrated spectrometer assay verification is commonly used in pharmaceutical analysis, environmental testing, food quality control, and chemical research to ensure precise quantification of substances and compliance with regulatory standards.

How often should a spectrometer be calibrated and verified?

The frequency of calibration and assay verification depends on the instrument’s usage, manufacturer recommendations, and regulatory requirements. Typically, calibration is performed periodically (e.g., monthly or quarterly), and assay verification is conducted whenever a new assay is developed or significant maintenance occurs.